LiteLLM or Coder AI Bridge? Why Many Teams Use Both

As enterprises scale AI across their development organizations, choosing the right AI gateway architecture is one of the most consequential infrastructure decisions they'll make.

While LiteLLM and Coder AI Bridge both serve as AI gateways, we often see enterprises deploy them together to solve different problems in different parts of their stack. In this post, we’ll look at what each one does, where it fits, and how the two compare.

What are AI Gateways?

Similar to how an API gateway works, AI gateways sit between applications and model providers, giving teams a single place to manage how AI is accessed. They handle routing, credential management, and usage tracking across different models.

AI gateways are typically introduced as usage scales across teams and applications, when organizations need to control costs, enforce security guardrails, and gain visibility into how AI is being used. Instead of every service managing its own integration and credentials, traffic is routed through the gateway so access can be centrally managed.
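To make the idea concrete, here is a toy sketch of the three jobs described above: routing, credential management, and usage tracking. It is not how LiteLLM or AI Bridge is implemented. The class name, model names, provider names, and key values are all made up for illustration, and the upstream provider call is stubbed out.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class ToyGateway:
    """Illustrative only: one place that routes, holds credentials, and counts usage."""

    # model name -> provider name (hypothetical routing table)
    routes: dict
    # provider name -> provider API key; callers never see these
    provider_keys: dict
    # caller id -> total tokens used
    usage: dict = field(default_factory=lambda: defaultdict(int))

    def handle(self, caller: str, model: str, prompt: str) -> dict:
        provider = self.routes[model]            # routing
        api_key = self.provider_keys[provider]   # credential management
        tokens = len(prompt.split())             # crude stand-in for real token counting
        self.usage[caller] += tokens             # usage tracking
        # A real gateway would now call the provider with api_key; that part is stubbed.
        return {"provider": provider, "authenticated": bool(api_key), "tokens": tokens}


gateway = ToyGateway(
    routes={"chat-small": "provider-a", "chat-large": "provider-b"},
    provider_keys={"provider-a": "key-a-placeholder", "provider-b": "key-b-placeholder"},
)
result = gateway.handle(caller="billing-service", model="chat-large", prompt="summarize this invoice")
print(result["provider"], dict(gateway.usage))
```

The calling service only names a model. Which provider serves it, and with which credential, is decided in one place.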

Introduction to LiteLLM

LiteLLM was released in 2023 and has quickly become one of the most widely adopted open-source AI Gateways, with more than 39,000 GitHub stars.

As a commonly deployed open-source LLM gateway and proxy, the project standardizes calls to AI providers under a single interface. In many architectures, LiteLLM becomes the corporate AI gateway for applications across the organization. Services, internal tools, and backend systems all send their AI requests through it. This allows admins to enforce consistent rules for AI usage across many systems.
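Because the LiteLLM proxy exposes an OpenAI-compatible API, a service can talk to it with an ordinary OpenAI-format chat completions request. The sketch below uses only the Python standard library and builds the request without sending it. The gateway URL, the key, and the model name are placeholders, and the exact path and model aliases depend on how your gateway is deployed.

```python
import json
import urllib.request

# Placeholders, not real values: substitute your gateway address, key, and model alias.
GATEWAY_URL = "http://localhost:4000/v1/chat/completions"
VIRTUAL_KEY = "sk-placeholder"


def build_chat_request(model: str, user_message: str) -> urllib.request.Request:
    """Build an OpenAI-format chat completions request aimed at the gateway."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        GATEWAY_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {VIRTUAL_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("example-model", "Explain this stack trace.")
# Sending is left out on purpose; against a running gateway it would look like:
#     with urllib.request.urlopen(request) as response:
#         reply = json.load(response)
print(request.get_method(), request.full_url)
```

Switching the underlying provider then means changing the gateway's configuration, not the request shape the service sends.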

The core access model revolves around virtual keys assigned to services or teams. For application workloads, this works well. For hundreds of developers using coding agents, the operational model shifts: you need a provisioning process for each developer, a rotation cadence, and a way to answer questions such as: which person on my team spent what, on which model, this month?

In developer-heavy environments, the gateway is no longer serving only backend services. Developer tools, AI agents, and IDE extensions start generating large volumes of requests. Without identity-aware attribution, usage is often tied to a shared key, which makes it harder to understand how AI resources are being consumed across engineering teams.

LiteLLM typically operates at the service or API layer, not at the level of individual developer environments.

What Coder AI Bridge brings

Coder AI Bridge is designed for use inside the developer environment, where each request comes from a single user or agent identity. It is not meant to power inference for AI applications. It acts as a secure gateway between developer tools and LLM providers, allowing:

- Centralized management of credentials used by developer environments

- Governance over how developer tools access AI providers

- Routing AI requests from IDE extensions or agents

- Keeping credentials and traffic inside the platform

In many environments today, developers access AI services using a single shared LiteLLM key. That makes it difficult to understand who is responsible for usage or to prevent one user from consuming a disproportionate amount of tokens. AI Bridge introduces an identity-aware layer to help address that problem. Instead of relying on a shared API key for an AI gateway, AI Bridge ties AI requests to the authenticated Coder user and workspace. This allows organizations to centralize AI activity logging, integrate with existing observability systems, and attribute AI usage to developer identities and groups.

Because requests originate inside authenticated workspaces, the platform can associate them with a specific user session, workspace, and development environment. These events can then be exported to existing logging or observability systems, making it possible to analyze usage patterns across developers, teams, or time ranges.
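As a rough illustration of what identity-attributed usage data makes possible, the sketch below answers "who used how many tokens, on which model, in a given month?" and serializes events as JSON lines for a log pipeline. The record shape and field names are hypothetical and are not AI Bridge's actual log schema. The users, workspaces, and numbers are made-up sample data.

```python
import json
from collections import defaultdict
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class UsageEvent:
    """Hypothetical record shape; not AI Bridge's actual log schema."""

    timestamp: datetime
    user: str
    group: str
    workspace: str
    model: str
    tokens: int


def tokens_by_user_and_model(events, year, month):
    """Total tokens per (user, model) pair for one calendar month."""
    totals = defaultdict(int)
    for event in events:
        if (event.timestamp.year, event.timestamp.month) == (year, month):
            totals[(event.user, event.model)] += event.tokens
    return dict(totals)


def to_json_lines(events):
    """Serialize events one per line, a format many log shippers accept."""
    return "\n".join(
        json.dumps({**asdict(e), "timestamp": e.timestamp.isoformat()}) for e in events
    )


# Made-up sample data
events = [
    UsageEvent(datetime(2025, 3, 3, tzinfo=timezone.utc), "alice", "payments", "alice-dev", "model-x", 1200),
    UsageEvent(datetime(2025, 3, 9, tzinfo=timezone.utc), "alice", "payments", "alice-dev", "model-x", 800),
    UsageEvent(datetime(2025, 3, 9, tzinfo=timezone.utc), "bob", "search", "bob-dev", "model-y", 500),
    UsageEvent(datetime(2025, 4, 1, tzinfo=timezone.utc), "bob", "search", "bob-dev", "model-y", 300),
]
march = tokens_by_user_and_model(events, 2025, 3)
print(march)
print(to_json_lines(events[:1]))
```

With a single shared key, every one of these events would carry the same identity, and the per-user breakdown could not be computed at all.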

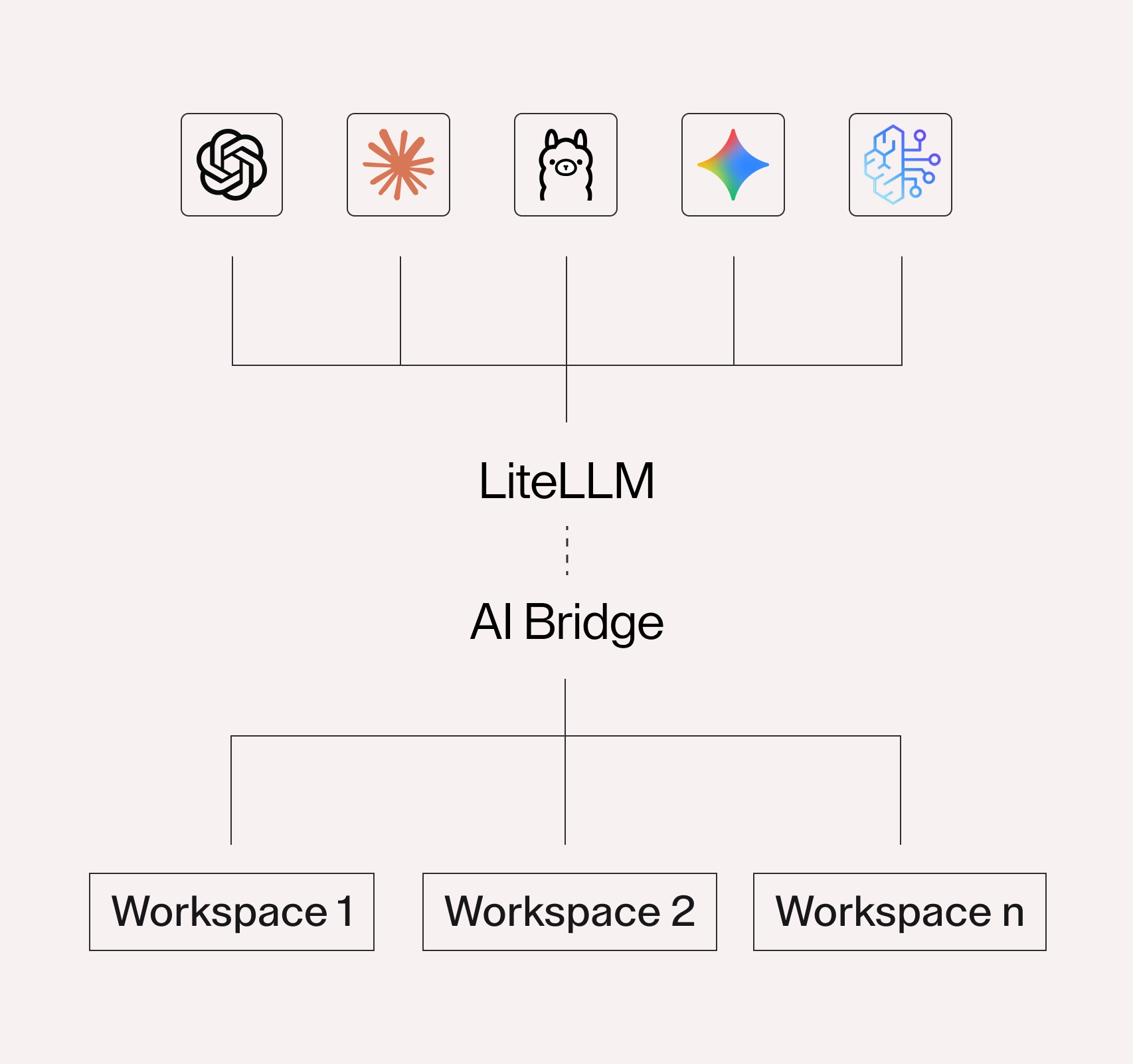

Requests originate from developer workspaces and pass through AI Bridge, where they can be associated with the user and observed or governed through external systems and policies.

Here's a bird's eye view comparing LiteLLM and Coder AI Bridge:

| Aspect | LiteLLM | Coder AI Bridge |
|---|---|---|
| Primary role | Corporate LLM gateway for routing and managing AI access across applications | AI integration layer for enabling and governing AI usage within developer workspaces |
| Scope | Any application calling LLM APIs | Workspace developer tools (VS Code, AI agents, CLI, etc.) |
| Deployment | Self-hosted Python service or SDK | Built into Coder platform |
| Model provider | Routes to 100+ providers (e.g. OpenAI, Anthropic, Bedrock, etc.) | Connects developer environments to upstream AI providers or gateways (e.g. OpenAI, Anthropic, Bedrock) |
| API format | Normalizes APIs to OpenAI format | Designed around developer tools and workspace integrations |
| Identity model | Service-level (API keys / virtual keys) | User and workspace-level attribution |
| Governance | Proxy-level budgets, routing, and guardrails | Identity-based attribution and controls via Coder workspaces |
| Typical consumer | Applications and developers calling LLM APIs | Developers using AI tools inside Coder workspaces |
| Primary owner | Central AI / Infrastructure / IT team | Platform Engineering / Developer Productivity team |

How teams combine LiteLLM and AI Bridge

To get the best of both worlds, teams stack LiteLLM and AI Bridge together. LiteLLM sits at the corporate gateway layer, handling AI access for all consumers: applications, internal tools, automated pipelines, and developers alike. It enforces organizational guardrails, manages provider credentials at the enterprise level, and serves as the centralized connection point to inference providers like AWS Bedrock.

Coder AI Bridge sits at the developer layer, downstream of LiteLLM. Developer traffic flows through AI Bridge first, where it is attributed to a named user, can be monitored for usage, and is logged through Coder, with the option to export to external observability systems. It then continues upstream through LiteLLM to the provider.
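The layering can be pictured as two small functions chained together. This is a conceptual sketch only: `developer_layer` and `gateway_layer` are hypothetical stand-ins for AI Bridge and LiteLLM, the routing table is invented, and no real provider is called.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIRequest:
    model: str
    prompt: str
    user: Optional[str] = None      # filled in by the developer layer
    provider: Optional[str] = None  # filled in by the gateway layer


audit_log = []  # (user, model) pairs recorded at the developer layer


def gateway_layer(request: AIRequest) -> AIRequest:
    """Stand-in for LiteLLM: choose a provider for the requested model."""
    routes = {"model-x": "provider-a", "model-y": "provider-b"}  # hypothetical routing table
    request.provider = routes[request.model]
    return request  # a real gateway would call the provider here


def developer_layer(request: AIRequest, authenticated_user: str) -> AIRequest:
    """Stand-in for AI Bridge: attach the signed-in user, log, then forward upstream."""
    request.user = authenticated_user
    audit_log.append((authenticated_user, request.model))
    return gateway_layer(request)


handled = developer_layer(AIRequest(model="model-y", prompt="refactor this function"), "alice")
print(handled.user, handled.provider, audit_log)
```

Attribution happens before the request reaches the corporate gateway, so the routing table can change without touching the developer-facing layer.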

AI Bridge handles workspace governance, while LiteLLM handles multi-model routing

These tools solve different layers of the stack:

- Coder workspaces manage developer environments.

- AI Bridge connects developer identity to AI usage.

- LiteLLM handles model routing and gateway functionality.

This separation of responsibilities makes the system easier to evolve over time. For example, some teams may replace their LLM proxy in the future or introduce additional routing layers. Because AI Bridge sits at the developer boundary, the developer experience remains stable even as the backend infrastructure changes.

It also allows admins to adjust AI routing strategies or model providers without requiring changes to developer tooling inside the workspace.

Bottom line

Both LiteLLM and AI Bridge help simplify and manage access to AI models, but they take different approaches. LiteLLM excels at model routing and gateway abstraction. AI Bridge ties developer identity to governance, providing deeper visibility into usage and attribution. They operate at different layers of the stack, addressing application-level and developer-level concerns respectively. When these components are combined, teams gain both flexibility and control.

We’re continuously adding new capabilities to AI Bridge. If you’d like to see it in action, take a test drive: https://coder.com/trial.
