On March 31, the latest version of Anthropic's Claude Code shipped to the npm registry with a source map file that should have been excluded from the package. Within hours, more than 500,000 lines of proprietary source code were mirrored across GitHub, X, Reddit, and decentralized repositories. Anthropic responded quickly, confirming that the leak was "caused by human error, not a security breach," and that no customer data or credentials were exposed.

The root cause was a missing exclusion rule in a build configuration file. A simple oversight in a packaging step, the kind of mistake any engineering team can make, turned what should have been a routine release into front-page news. The Claude Code incident was not an isolated event. It was one of a series of incidents that compressed months of supply chain exposure into days.

That is the reality of software supply chains in 2026: even the best teams face this risk when they ship at the pace modern development demands.

A cascade, not a coincidence

The timeline is worth laying out in full, because the density matters.

First, Trivy disclosed a critical vulnerability in its container scanning tool. Days later, Trivy was hit again through a GitHub Actions compromise that widened the blast radius. LiteLLM was caught in the fallout, issuing its own security update as a downstream dependency. Then the Claude Code source map leaked. Within hours, axios, one of npm's most downloaded packages, was compromised with malicious versions delivering a remote access trojan. Attackers then immediately began squatting Anthropic's internal package names on npm, targeting developers attempting to compile the leaked source.

Five distinct supply chain incidents in roughly two weeks. Each one exploited a different vector: a vulnerability scanner, a CI/CD pipeline, a dependency chain, a build configuration error, and package name squatting. The common thread is that every one of them targets the software supply chain, the layer of tooling, packages, and infrastructure that sits between your code and production.

Why the Claude Code incident matters beyond Anthropic

The specifics of the Claude Code incident illustrate how vulnerable even the most rigorous engineering organizations can be. Bun, the bundler Anthropic uses, generates source maps by default. A single missing exclusion rule in a configuration file led to one of the most significant accidental code exposures in recent AI history.
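A release pipeline can guard against this class of mistake with a pre-publish check on the list of files about to ship. The sketch below is illustrative only and is not Anthropic's tooling: `find_source_maps` and `check_package` are hypothetical names, and the input is assumed to be the file paths the package would contain, such as the file list reported by `npm pack --dry-run`.

```python
from pathlib import PurePosixPath


def find_source_maps(packed_paths):
    """Return the packed file paths whose final extension is .map."""
    return [p for p in packed_paths if PurePosixPath(p).suffix == ".map"]


def check_package(packed_paths):
    """Raise if any source map would ship; return True otherwise.

    Hypothetical gate: a CI job would call this before publishing
    and fail the release when it raises.
    """
    leaked = find_source_maps(packed_paths)
    if leaked:
        raise RuntimeError("source maps would be published: " + ", ".join(leaked))
    return True
```

The point of a check like this is that it does not depend on anyone remembering the exclusion rule: the release fails closed if a map file slips into the package contents.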

As development velocity increases and as AI agents take on more of the build and release workflow, the surface area for human oversight gaps grows with it. Every organization shipping software at scale faces some version of this risk. The question is whether a single missed configuration step can cascade into a material exposure.

The package squatting that followed the incident makes the downstream risk concrete. Attackers registered npm packages mimicking Anthropic's internal tooling, targeting developers exploring the leaked source. That is a supply chain attack built on top of an accidental exposure, and it happened within 24 hours.

The infrastructure layer is the control surface

The instinct after a wave of incidents like this is to lock things down. Restrict internet access. Block package installations. Pull AI coding agents off developer machines entirely. That approach trades one problem for another: you eliminate supply chain risk by eliminating the ability for engineers to do their work.

The alternative is to move the security perimeter from the endpoint to the infrastructure. Instead of securing every developer laptop and hoping nobody installs a compromised package, you run development workloads inside isolated environments where the blast radius is contained by design.

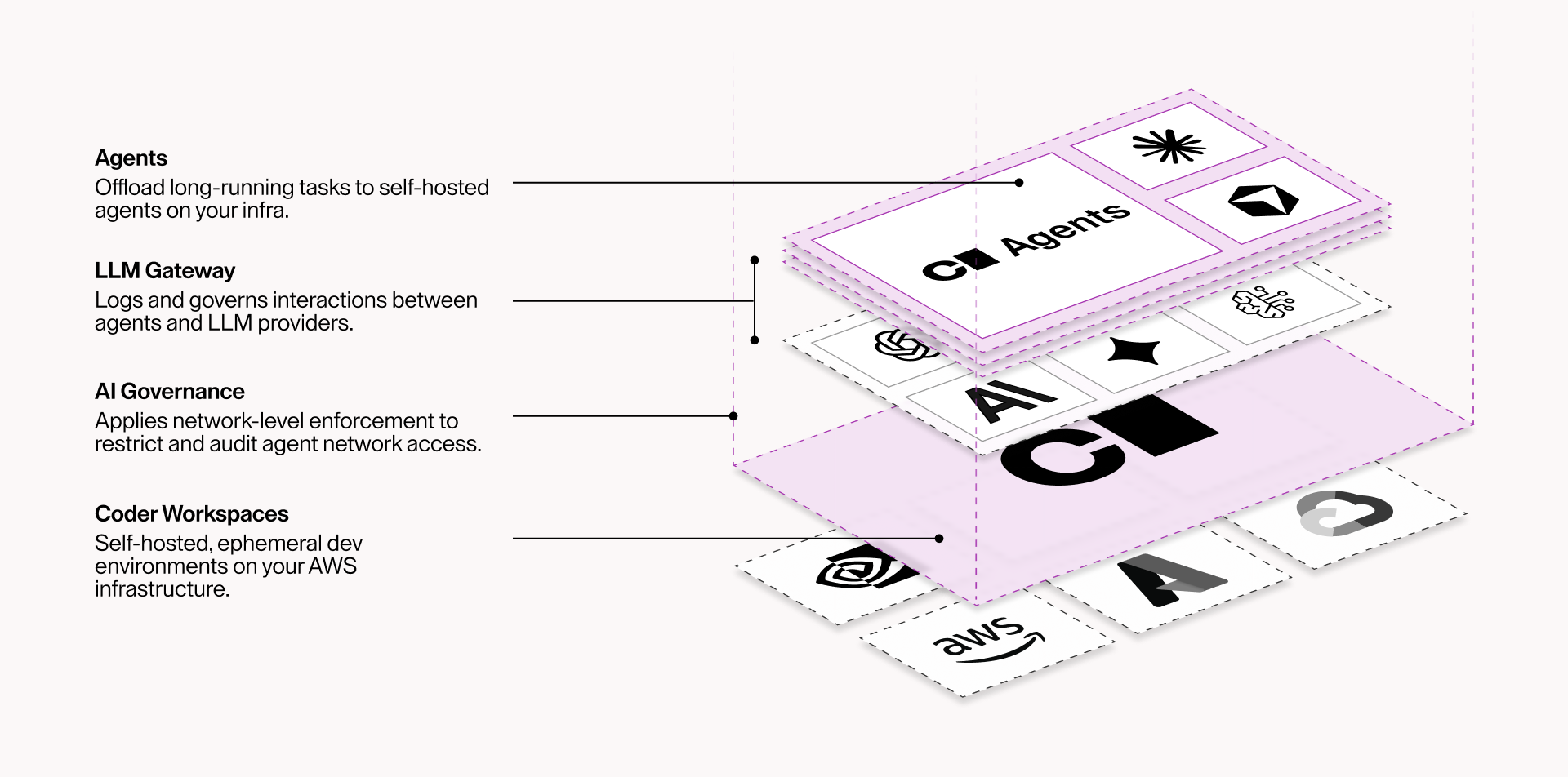

This is the architectural principle behind cloud development environments. When a developer or an AI agent operates inside an isolated workspace, a compromised dependency cannot reach the corporate network, exfiltrate credentials, or pivot to production systems. The workspace boundary enforces containment automatically, regardless of what runs inside it. Kubernetes, Firecracker micro-VMs, full VMs, physical hosts: the isolation mechanism is configurable, but the principle is consistent. The environment is the perimeter.

This is not a theoretical defense model. When the Shai-Hulud 2.0 supply chain attack compromised hundreds of npm packages and over 25,000 GitHub repositories in late 2025, the organizations that recovered fastest were the ones that had already separated developer environments from developer endpoints. They could rebuild, rotate credentials, and resume work in hours while others spent weeks auditing distributed machines. The same architectural controls that contained Shai-Hulud apply directly to the incidents unfolding this week.

Centralized tooling management addresses the Claude Code scenario directly. When workspace templates define which versions of AI tools ship pre-installed and verified, developers do not need to pull agents from registries themselves. Environment configuration becomes code, not a policy document that relies on human compliance.
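To make "configuration as code" concrete, here is a minimal sketch of a check a workspace could run at startup to confirm that installed tools match the versions its template pins. Everything here is an assumption for illustration: the tool names, the version numbers, and the `find_version_drift` helper are hypothetical and do not describe any particular product's template format.

```python
# Hypothetical pinned versions, as a workspace template might declare them.
PINNED_TOOLS = {
    "example-agent-cli": "1.2.3",
    "example-linter": "4.5.6",
}


def find_version_drift(installed, pinned):
    """Compare installed tool versions against the pinned set.

    Returns {tool: (expected, actual)} for every pinned tool that is
    missing (actual is None) or installed at a different version.
    """
    drift = {}
    for name, expected in pinned.items():
        actual = installed.get(name)
        if actual != expected:
            drift[name] = (expected, actual)
    return drift
```

An empty result means the workspace matches its template; anything else is a signal to rebuild the workspace rather than patch it by hand.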

Network boundaries add the final layer. The axios compromise and the package squatting incidents both depend on malicious code phoning home, which requires outbound network access.

AI Governance enforces network isolation at the process level, and workspace-level network controls provide additional defense. Administrators define exactly which domains an agent can reach; everything else is blocked by default. Instead of giving agents unrestricted internet access, you route model traffic through a controlled gateway and restrict all other outbound communication. The exfiltration path is closed.
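The default-deny policy described above can be sketched as a small allowlist check. This models only the policy decision, not how any product enforces it at the process or network level. The domain names and the `host_is_allowed` and `egress_allowed` helpers are hypothetical, and treating subdomains of an allowed domain as allowed is a design assumption of this sketch.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: a model gateway and an internal package mirror.
ALLOWED_DOMAINS = {"gateway.example.internal", "registry.example.internal"}


def host_is_allowed(host, allowed):
    """True if host equals an allowed domain or is a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in allowed)


def egress_allowed(url, allowed=ALLOWED_DOMAINS):
    """Default deny: only URLs whose host is on the allowlist pass."""
    host = urlsplit(url).hostname
    if host is None:  # no parseable host, so nothing to allow
        return False
    return host_is_allowed(host, allowed)
```

Under this policy, a compromised dependency that tries to reach an attacker-controlled host gets a denial, because the host is simply absent from the list; no signature or prior knowledge of the attack is required.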

What this week should change about your security posture

The pattern from the past two weeks is not going away. Supply chain attacks are increasing in frequency and sophistication, because the economics favor attackers: compromise one widely-used package, and you reach thousands of organizations simultaneously. AI coding agents amplify this dynamic.

The organizations that weather this well will be the ones that treat their development environment as a governed infrastructure layer, not as a collection of individual machines running whatever tools each developer prefers. By combining isolated workspaces, centralized tooling management, and network boundaries, they can scale AI safely.

The response to a week like this one should not be pulling AI tools away from your engineering team. It should be putting the infrastructure in place to run them safely. Start with Coder's approach to secure AI development infrastructure.
