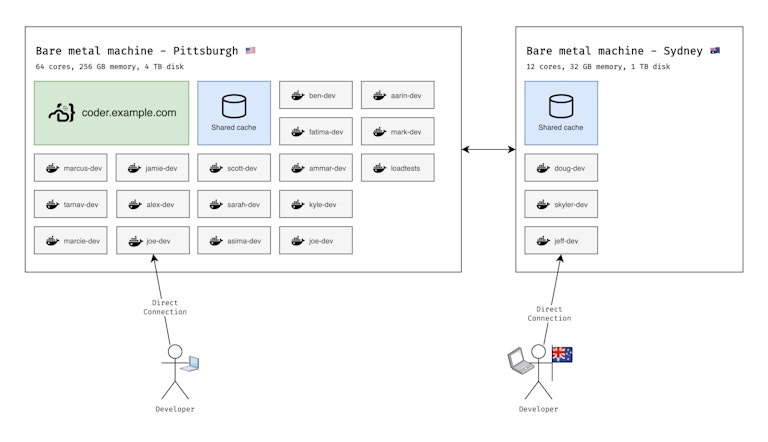

How our development team shares one giant bare metal machine

Well, we actually develop on two machines. When our Australian coworkers found out, they wanted one too.

First, let's explain how we landed here. Over the past few years, we've tried a few different solutions for development environments.

Local environments

We’re building a remote development platform, so local development wouldn’t be a good choice for us. Awkward. Besides, we don’t want new engineers to wait for equipment or manually set up their environments before they can start work.

Engineers at Coder use Windows machines, Linux desktops, and MacBooks (x86 and M1). We’re a small company and don’t want to maintain lengthy wikis with instructions for each platform.

More importantly, we don’t want to be stuck troubleshooting dev environments instead of working on customer-facing features.

Kubernetes environments

Our first product, Coder v1, uses Kubernetes to create remote development environments. This allowed us to onboard engineers quickly. Through web IDEs or SSH, engineers develop using powerful cloud compute (gigabit network, 16 cores, 32 GB RAM). Some were even developing via an iPad.

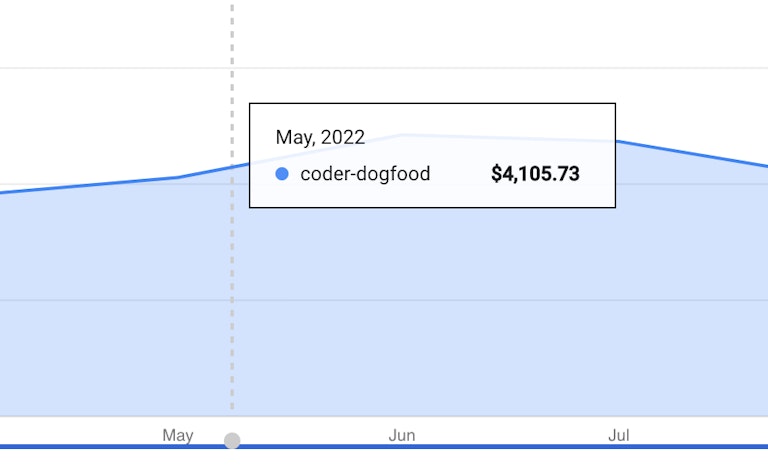

After moving our development to Kubernetes, we spent pretty much zero time troubleshooting our environments. However, we spent a lot of time tuning our clusters and trying to keep our cloud bill down. We maintained separate clusters in the US, Brazil, and Australia so international employees could develop with low latency. Apart from the compute costs, Google charges ~$72/cluster/mo, which gets quite pricey across regions and demo/staging/production environments.
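To make the cluster fee concrete, here is a rough back-of-the-envelope sketch. It only covers the per-cluster management fee quoted above, not compute. The three development regions come from this post; the count of extra demo/staging/production clusters is a hypothetical number picked for illustration, not our actual setup.

```python
# Approximate per-cluster management fee quoted in the post (USD per month).
GKE_CLUSTER_FEE_USD = 72

# Regions where the post says development clusters were maintained.
DEV_REGIONS = ["US", "Brazil", "Australia"]


def monthly_cluster_fees(cluster_count: int, fee_per_cluster: float = GKE_CLUSTER_FEE_USD) -> float:
    """Return the monthly management fee for a number of clusters (compute excluded)."""
    if cluster_count < 0:
        raise ValueError("cluster_count must be non-negative")
    return cluster_count * fee_per_cluster


if __name__ == "__main__":
    dev_only = monthly_cluster_fees(len(DEV_REGIONS))
    print(f"Dev clusters only: ~${dev_only:.0f}/mo")

    # ASSUMPTION: one extra cluster each for demo, staging, and production.
    # The real number is not stated in the post.
    hypothetical_extra_clusters = 3
    total = monthly_cluster_fees(len(DEV_REGIONS) + hypothetical_extra_clusters)
    print(f"With {hypothetical_extra_clusters} hypothetical extra clusters: ~${total:.0f}/mo")
```

With just the three regional development clusters, the fee alone comes to about $216/mo before a single workspace starts.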

Kubernetes is awesome, and we still recommend that enterprises use Coder v2 with Kubernetes, but for a small startup like ours it was overkill. Kubernetes was cheaper than giving each developer a beefy VM, but not by much.

Today: Docker environments on a bare metal machine

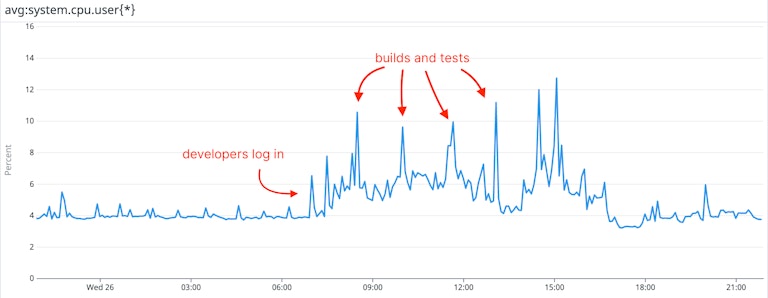

Our second iteration, Coder v2, gave us a bit more flexibility. We rent a 32-core bare metal machine from TeraSwitch for $400/mo. Typically, the machine is underutilized. However, workspaces can burst to leverage all resources for expensive tasks.

Here are some benchmarks:

| | TeraSwitch Box | GKE Cluster | Local machine (2019 MacBook Pro) |
|---|---|---|---|
| Network speed test | 2.5 gigabit | 1.1 gigabit | 100 megabit (simulated) |
| Workspace start time | 5s | 3m 39s | 8m 45s (nix-shell) |
| Build (coder/coder) | 1m 4s | 1m 34s | 2m 8s |
| Test suite (coder) | 18s | 47s | 1m 56s |
| git clone (kubernetes/kubernetes) | 44s | 56s | 3m 24s |
| Build (kubernetes/kubernetes) | 2m 12s | 6m 55s | 7m 8s |
| Experience | 😎 Cool | 🙂 Nice | 🤷 Meh |
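If you'd rather see ratios than raw timings, here is a small sketch that turns the timed rows of the table into "how many times faster" figures. The durations are copied from the table and normalized to the `Nm Ns` form. The helper names (`to_seconds`, `speedups`) are ours for illustration. These are informal, one-off measurements, so treat the ratios as rough.

```python
import re

# Timings copied from the benchmark table, in the order:
# (TeraSwitch box, GKE cluster, local 2019 MacBook Pro).
BENCHMARKS = {
    "Workspace start time": ("5s", "3m 39s", "8m 45s"),
    "Build (coder/coder)": ("1m 4s", "1m 34s", "2m 8s"),
    "Test suite (coder)": ("18s", "47s", "1m 56s"),
    "git clone (kubernetes/kubernetes)": ("44s", "56s", "3m 24s"),
    "Build (kubernetes/kubernetes)": ("2m 12s", "6m 55s", "7m 8s"),
}


def to_seconds(duration: str) -> int:
    """Convert a duration such as '3m 39s' or '18s' to whole seconds."""
    match = re.fullmatch(r"(?:(\d+)m)?\s*(?:(\d+)s)?", duration.strip())
    if match is None or not any(match.groups()):
        raise ValueError(f"unrecognized duration: {duration!r}")
    minutes, seconds = (int(g) if g else 0 for g in match.groups())
    return minutes * 60 + seconds


def speedups(row):
    """Return (GKE time / box time, local time / box time) for one table row."""
    box, gke, local = (to_seconds(d) for d in row)
    return gke / box, local / box


if __name__ == "__main__":
    for task, row in BENCHMARKS.items():
        vs_gke, vs_local = speedups(row)
        print(f"{task}: {vs_gke:.1f}x vs GKE, {vs_local:.1f}x vs local")
```

The biggest gap is workspace start time: 5 seconds on the bare metal box versus 219 seconds on GKE and 525 seconds locally.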

How we did it

- We installed Coder on the primary machine.

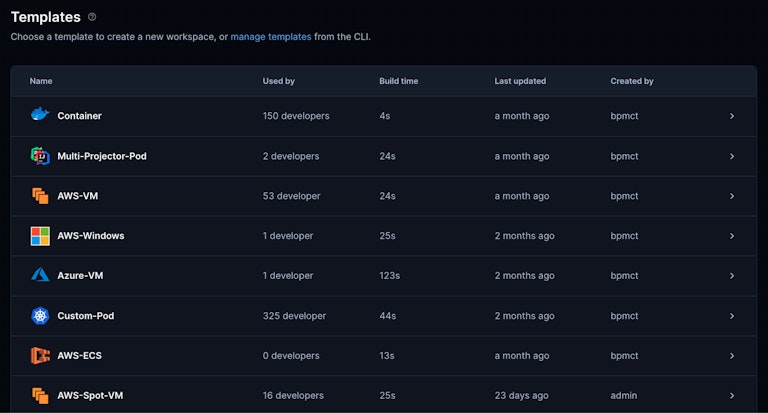

- We wrote a Coder template with Terraform. It gives each developer a container using the sysbox-runc runtime (for Docker-in-Docker).

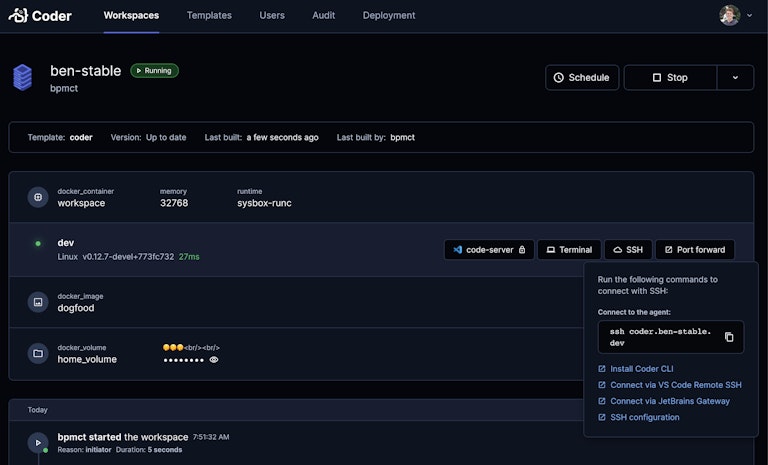

- Our engineers log in, create a workspace, and connect over HTTPS (code-server) or SSH (VS Code Remote, JetBrains Gateway).

- Admins can push updates to the template anytime. Developers are notified when a new template version is out.

Oh, and we set up another machine + template for our Australian coworkers. Since Coder’s networking supports peer-to-peer connections, users connect directly with no added latency.

Coder is free and open source

Whether you’re a tinkerer, small startup, or (especially 😉) an enterprise with 1000+ developers, we think you’ll like Coder.

Developers connect with familiar interfaces (such as VS Code Remote SSH) and admins can manage workspace templates with familiar infrastructure tools (such as Terraform).

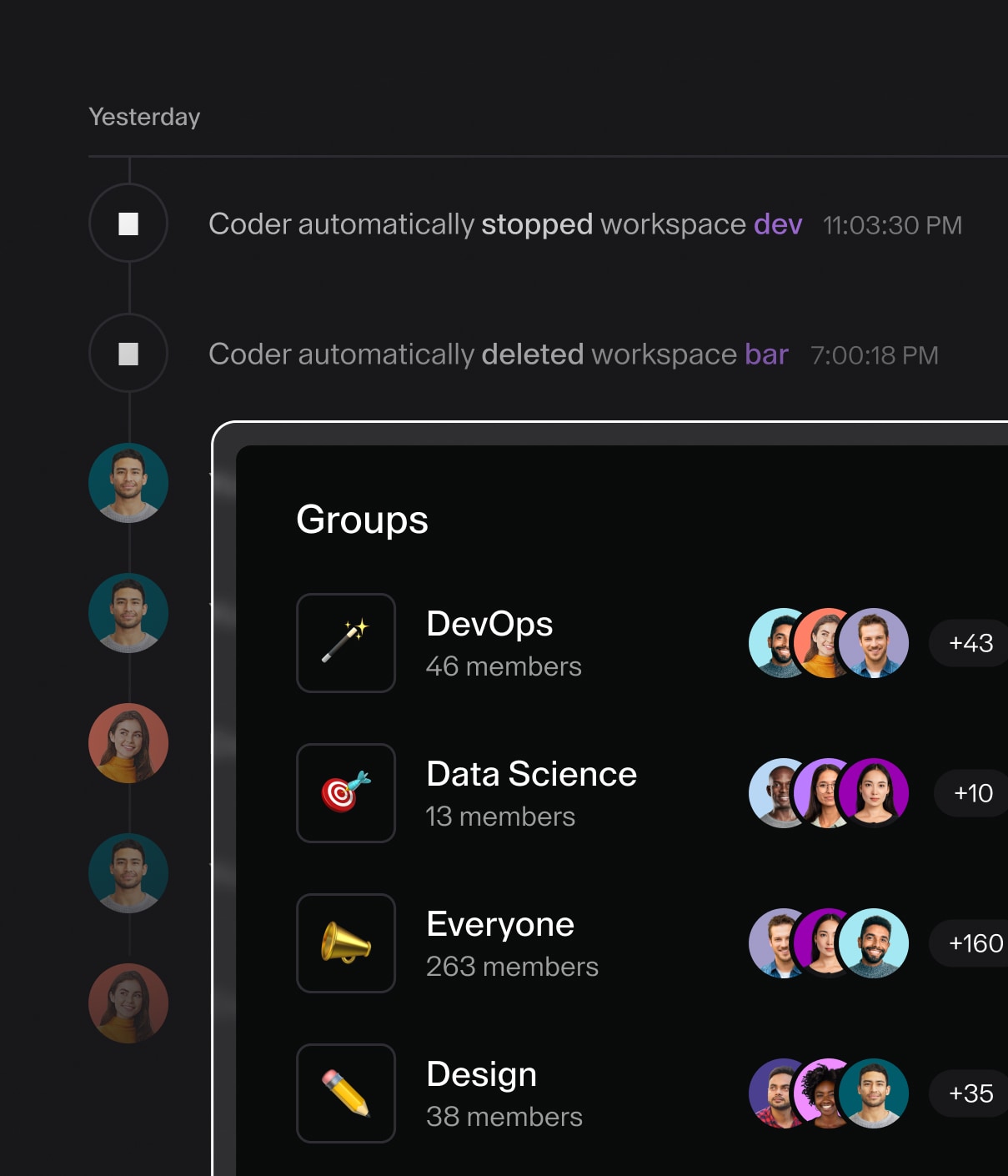

See how to install at https://github.com/coder/coder or reach out on Discord if you have questions. We only charge for enterprise features such as RBAC and audit logs.