Enterprise AI Adoption: What’s Really Holding Companies Back? Insights From a Chicago Executive Roundtable

Enterprises across every industry are racing to understand how AI can reshape their operations, yet many feel unprepared to adopt it responsibly. I recently attended an executive roundtable in Chicago, where leaders from healthcare, finance, technology, and manufacturing shared candid insights into the organizational fears, gaps, and unknowns that are slowing their progress.

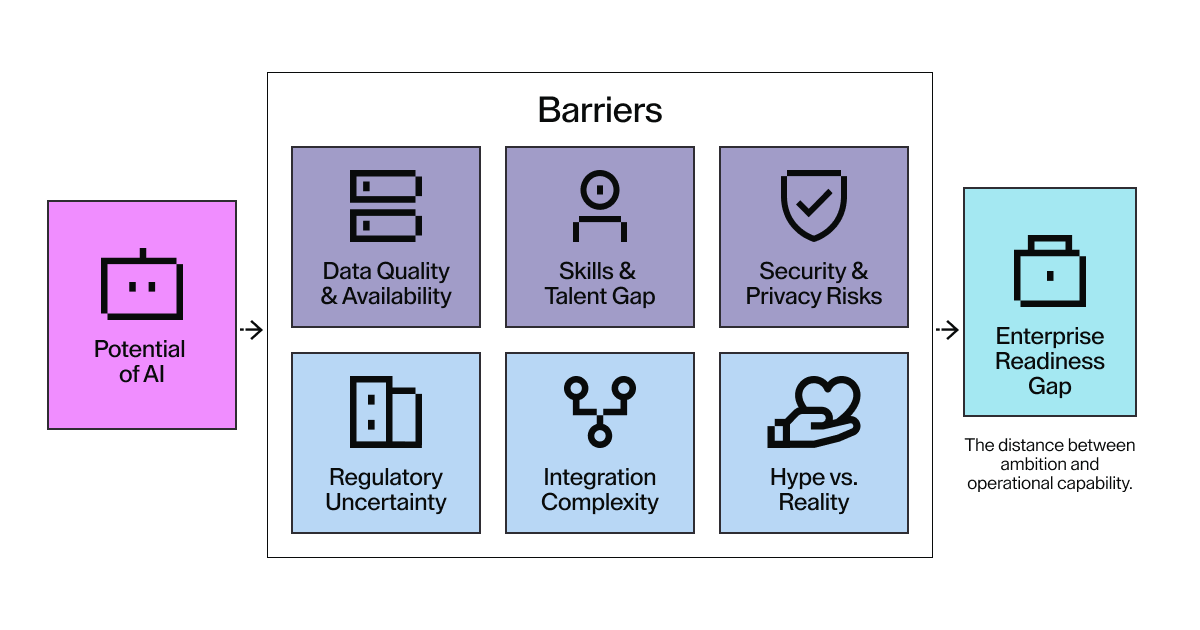

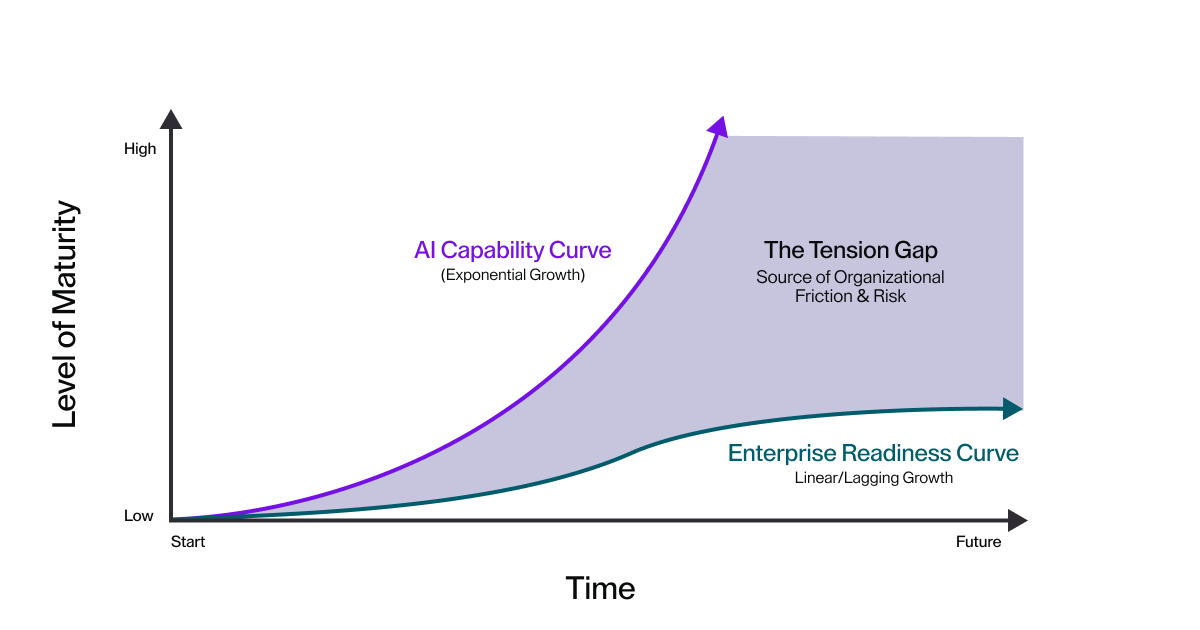

These concerns echo what many AI industry reports have been documenting: the barrier to enterprise AI adoption is no longer the technology; it's everything surrounding it. In this post, I break down the themes from the roundtable, align them with broader industry trends, and outline what organizations can do next.

Data compliance, protection, and accuracy still pose the largest risk

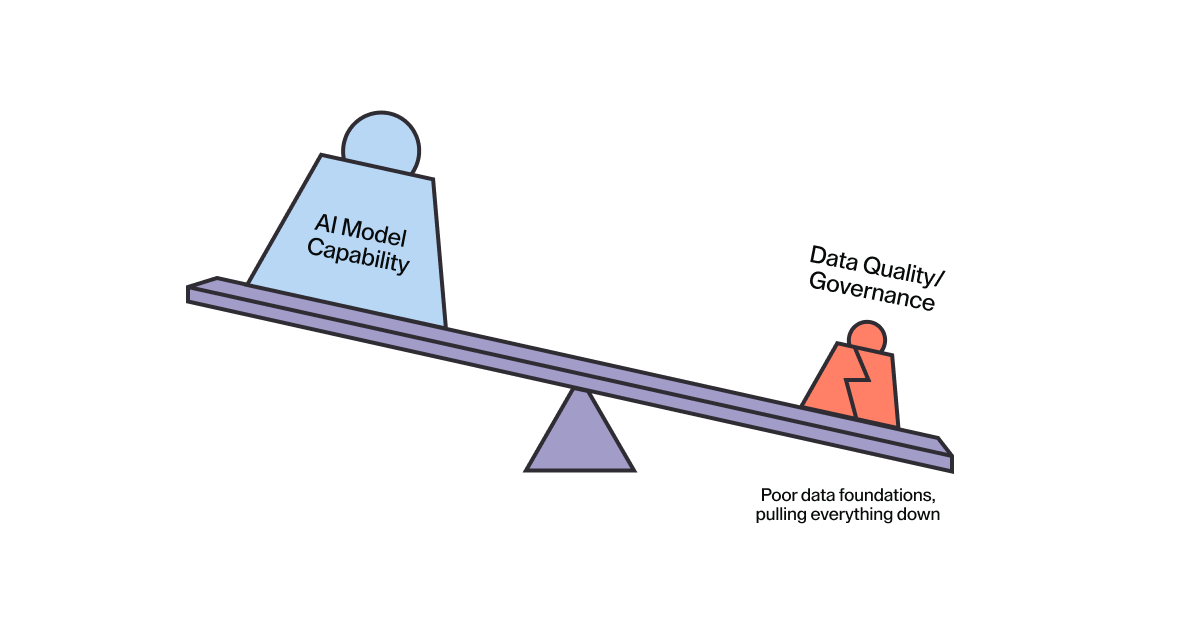

One of the strongest and most consistent concerns raised was the fragility of enterprise data readiness.

What leaders expressed

The data challenge goes deeper than volume or velocity. Leaders described a more fundamental problem: inconsistent data quality across fragmented systems, combined with manual governance processes that can't keep pace with AI's speed. The PEX Report 2025/26 found that 52% of business professionals cited data quality and availability as the biggest barrier to AI adoption—outranking even lack of expertise or regulatory concerns.

Redaction protocols remain manual. Tagging systems vary by department. Compliance checks happen in spreadsheets rather than at the infrastructure level. For regulated industries where a single governance failure can trigger investigations or fines, this creates an impossible choice: move fast with AI and accept compliance risk, or maintain governance standards and watch competitors pull ahead.

The talent gap is real—and slowing down enterprise AI readiness

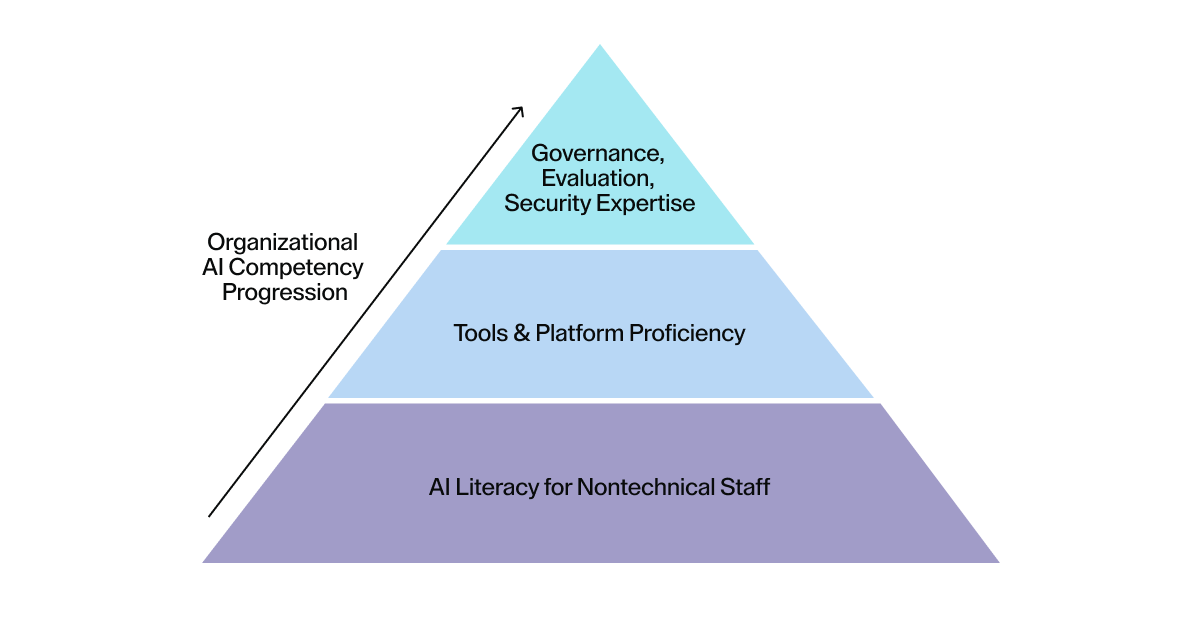

Even organizations eager to adopt AI frequently lack the internal experience needed to deploy, monitor, and scale it safely. The skills gap extends beyond basic AI literacy. Leaders described teams comfortable running experiments in sandboxes but uncertain about the disciplines required for production: AI governance frameworks, security protocols for autonomous agents, and pipeline management at scale.

IBM's research on AI adoption challenges found that 42% of enterprises cite inadequate generative AI expertise as a barrier. The pattern is consistent across research: organizations that invest in structured upskilling programs are 2.5 times more likely to scale AI successfully, according to Deloitte. The gap isn't just technical—it's operational. Enterprises need people who understand how to govern AI in production, not just how to prompt models in a playground.

The hype vs. reality gap creates internal fear and hesitation

Senior leadership teams face a paradox: move too slowly and lose ground to competitors adopting AI faster, or move too quickly and deploy capabilities that aren't production-ready. Leaders described this tension repeatedly—the pressure to demonstrate AI progress while lacking clear frameworks for evaluating which capabilities are actually mature enough for enterprise use.

Stanford's 2024 AI Index documented a widening gap between AI technical capability and organizational trust, revealing that cultural readiness, not technical innovation, has become the primary bottleneck to adoption. The fear extends beyond the executive suite. Developers and engineers expressed concern about displacement, loss of autonomy, and whether AI tools would help or undermine their work. When trust is uncertain and maturity benchmarks are unclear, the safest response becomes inaction—which only widens the gap between enterprises experimenting cautiously and competitors shipping AI-powered features to production.

Integration with third parties raises concerns around trust and complexity

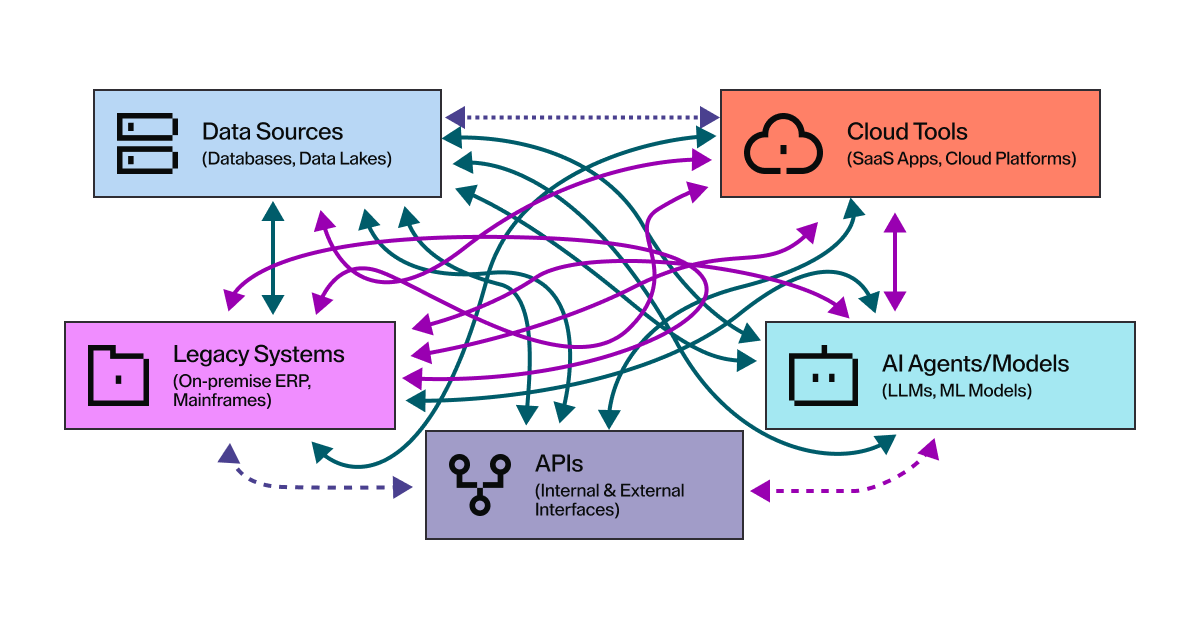

Enterprise ecosystems rarely operate in isolation, and AI introduces integration challenges that traditional systems weren't designed to handle.

Leaders described the friction immediately: connecting AI systems to legacy tools, existing cloud platforms, and established security perimeters creates architectural complexity that stalls deployments. The technical challenge compounds the trust problem. When AI models operate as black boxes, executives struggle to answer basic governance questions: who is accountable when an agent makes a decision, how do you explain an AI-generated outcome to regulators, and what happens when model behavior changes after an update?

Forrester identifies integration complexity as one of the most underestimated aspects of AI deployment, frequently resulting in implementation delays and increased security exposure as teams bolt AI onto systems that lack the right controls. The pattern repeats across industries: AI projects that ignore integration realities during planning face retrofit costs and security gaps that could have been avoided with infrastructure designed for both human and AI workflows from the start.

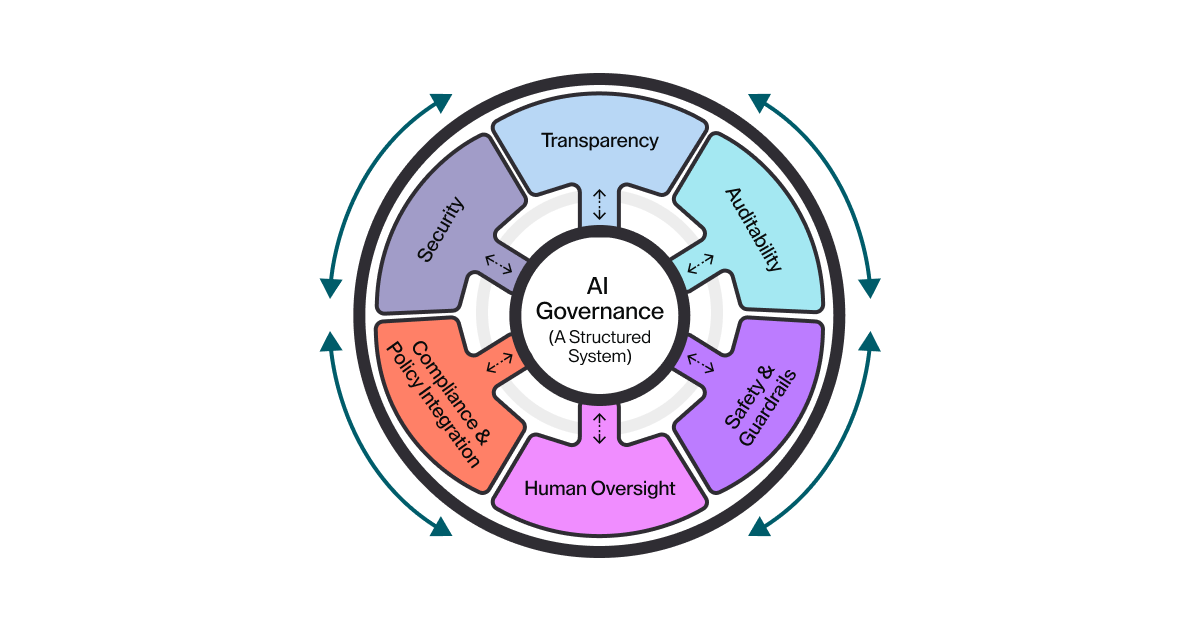

Regulation, guardrails, and governance continue to slow deployment

Organizations want AI that understands compliance—not AI they must constantly police.

Leaders described the operational burden clearly: establishing internal AI governance frameworks while regulatory requirements remain in flux. They know governance matters. The challenge is building the actual mechanisms that enforce it.

Teams struggle most with defining safe operating boundaries for AI agents that can autonomously execute code, access APIs, and make decisions that affect production systems. What does "safe" mean when an agent can work for hours without human intervention? How do you prove to auditors that AI workflows meet compliance requirements? New frameworks such as the EU AI Act (2024) add to the urgency.

The market is already responding to this need. Gartner predicts that by 2027, three out of four AI platforms will include built-in tools for responsible AI and strong oversight, with companies that lead in ethics, governance, and compliance gaining a major competitive edge. The timeline is clear: enterprises that lack infrastructure-level governance controls will find themselves retrofitting compliance onto AI systems that were never designed for auditability—a costly and risky path that could have been avoided by choosing the right foundation from the start.

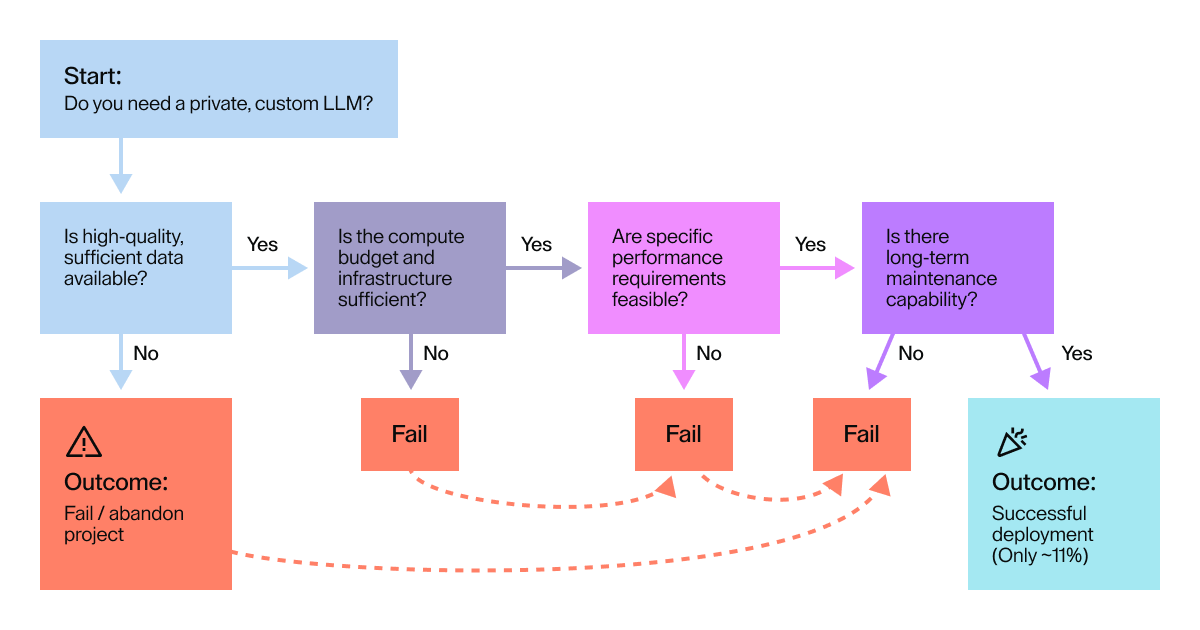

Private LLMs sound attractive—but are rarely production ready

Many organizations expressed interest in building private or domain-specific AI models, but few feel ready to take on the operational complexity.

The appeal is obvious: complete control over training data, model behavior, and intellectual property. The reality proves harder. Leaders described three compounding barriers: the cost and infrastructure demands of hosting private LLMs, uncertain confidence in their internal ML workflows, and the ongoing burden of lifecycle maintenance as models require updates, versioning, and performance monitoring.

The adoption gap tells the story. Menlo Ventures reports that only 13% of enterprise AI workloads use open-source models, down from 19% six months earlier, as the performance gap between open-source and frontier models, combined with deployment complexity, pushes enterprises toward managed solutions instead. The organizations that do succeed with private deployment share a common pattern: they build on infrastructure that already handles complex workloads, supports GPU provisioning, and provides the governance layer that makes ML pipelines auditable.

This foundation becomes critical as AI tools evolve. Teams that start with governed infrastructure can adopt state-of-the-art capabilities like autonomous coding agents without rebuilding their entire stack, a use case we documented in our blog post, Every Cursor Needs a Coder. Without that foundation, private LLM projects become science experiments that consume budget without ever reaching the developers who need them.

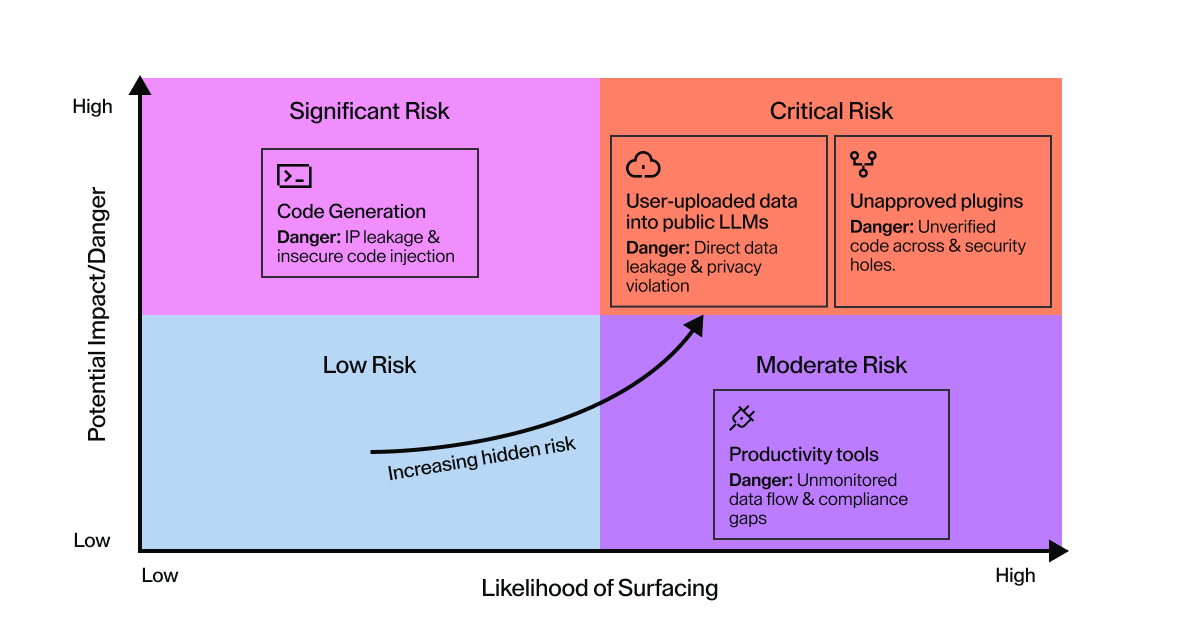

Security gaps and shadow AI are growing fast

The rise of "Shadow AI"—employees using unapproved AI tools—was a concern shared across nearly every industry represented.

Leaders described limited visibility into which AI tools teams are actually using, creating blind spots for data leakage and compliance violations. The concern intensifies when AI agents operate autonomously. An individual developer using ChatGPT to debug code creates one risk profile. An unsanctioned AI agent running overnight with access to production systems, customer data, and internal APIs creates another entirely.

The scale of shadow AI adoption is remarkable. MIT's State of AI in Business 2025 Report found that while only 40% of companies purchased official LLM subscriptions, workers from over 90% of surveyed companies reported regular use of personal AI tools for work tasks. The pattern is predictable: developers adopt tools that make them more productive, IT discovers the adoption months later, and security teams scramble to assess what data may have been exposed. The fundamental problem isn't the tools themselves—it's where AI agents run and what they can access. Enterprises that lack infrastructure-level controls over AI execution find themselves trying to govern through policy and training alone, a strategy that consistently fails when developers face pressure to ship faster.

Ensuring effective human–AI collaboration remains a top priority

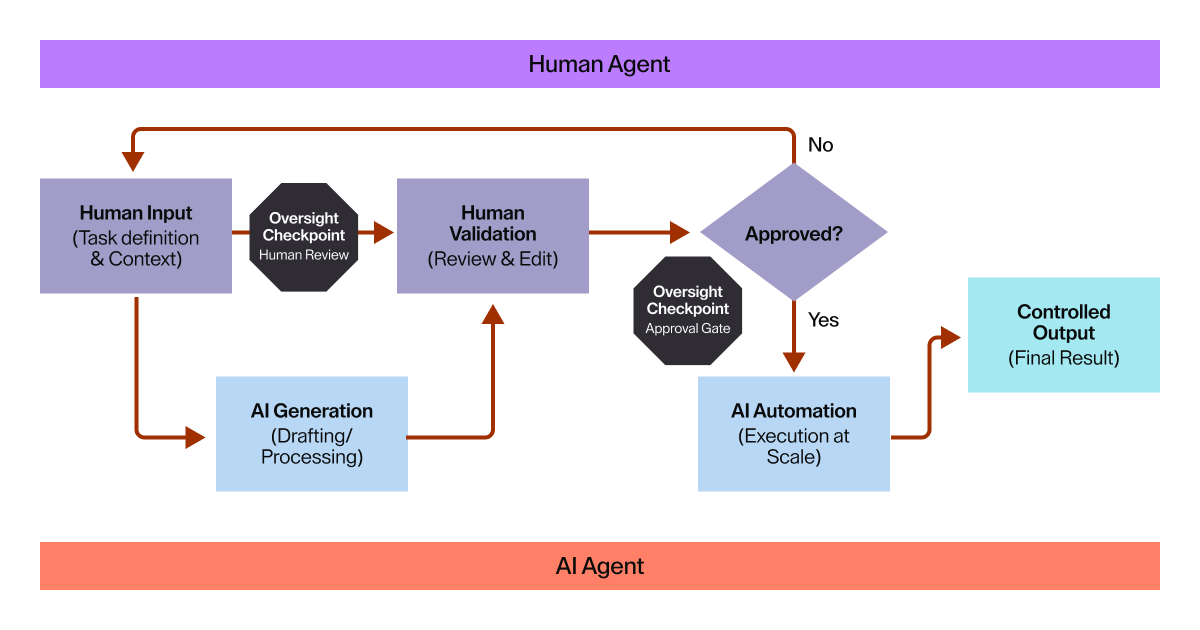

The roundtable discussion made it clear that successful AI adoption depends on clearly defined human oversight. Leaders described the operational confusion that emerges when roles blur: who reviews an AI agent's work, who approves its decisions, and what happens when an agent bypasses established approval processes? The questions become urgent when AI moves from suggesting code to autonomously opening pull requests, updating documentation, or modifying production configurations.

Without clear workflows defining where human control applies, teams face two bad outcomes: either they over-supervise and eliminate AI's productivity gains, or they under-supervise and discover problems only after agents have made irreversible decisions. The evidence supports structured oversight. Research on human-in-the-loop systems shows that human oversight reduces false positives by 67% and enhances decision transparency by 43%, demonstrating that the right balance between automation and human judgment drives better outcomes than either extreme.

The pattern holds across industries: enterprises that define explicit handoff points between human and AI work—specifying what agents can do autonomously, what requires review, and what demands approval—achieve both velocity and control. Those that leave these boundaries undefined end up with neither.
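To make the idea of explicit handoff points concrete, here is a minimal Python sketch of a policy table that maps agent actions to one of the three tiers described above. The action names and the `required_oversight` helper are hypothetical and chosen only for illustration; a real deployment would define its own actions and enforce the policy in its workflow tooling.

```python
from enum import Enum


class Oversight(Enum):
    AUTONOMOUS = "autonomous"  # agent may proceed on its own
    REVIEW = "review"          # a human reviews the result afterwards
    APPROVAL = "approval"      # a human must approve before the action runs


# Hypothetical action names, for illustration only.
HANDOFF_POLICY = {
    "run_tests": Oversight.AUTONOMOUS,
    "update_documentation": Oversight.REVIEW,
    "open_pull_request": Oversight.REVIEW,
    "modify_production_config": Oversight.APPROVAL,
}


def required_oversight(action: str) -> Oversight:
    """Return the oversight tier for an action.

    Actions missing from the policy fall back to the strictest tier,
    so an undefined boundary never means "no oversight".
    """
    return HANDOFF_POLICY.get(action, Oversight.APPROVAL)
```

Defaulting unknown actions to the approval tier is a deliberate choice in this sketch: the failure mode of an incomplete policy is extra review rather than an unsupervised change.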

Where enterprises go from here: Infrastructure as the answer

The discussions in Chicago reinforce what we're seeing globally: the barrier to AI adoption isn't the technology anymore—it's enterprise readiness. Organizations need stronger data foundations, governance frameworks, skilled teams, smoother integrations, and safe experimentation. What connects these needs is infrastructure.

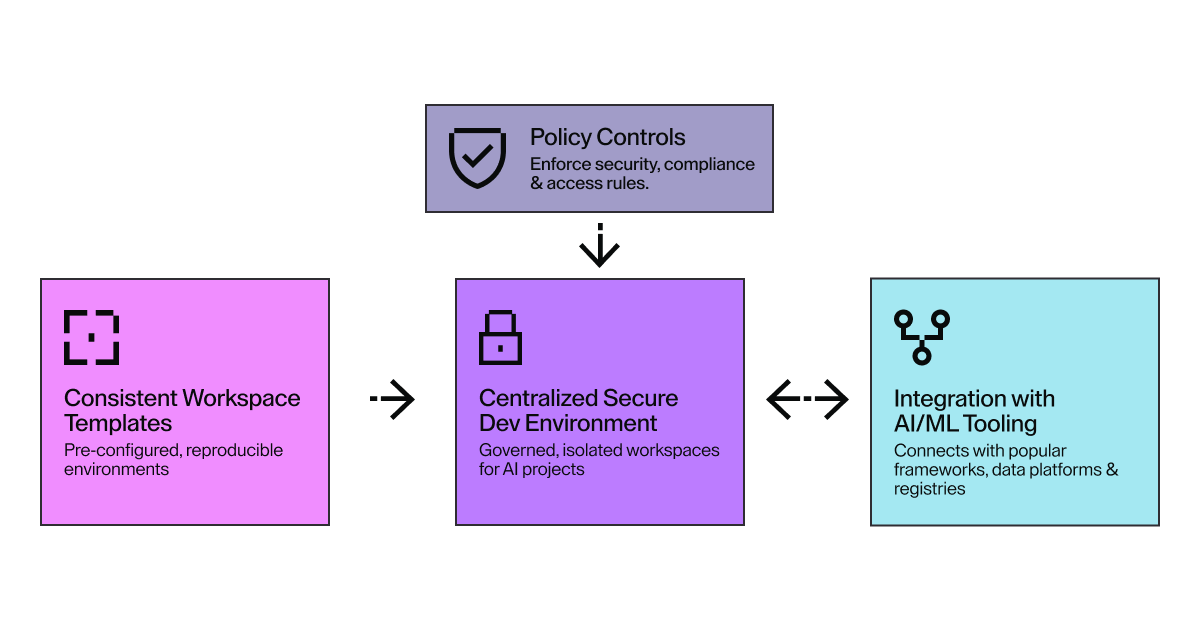

The solution is moving AI execution off laptops and into governed environments. When agents run inside secure, centrally managed infrastructure instead of on individual devices, governance shifts from policy compliance to architectural enforcement. Controls become impossible to bypass because they're built into the layer where work happens.

Coder built three capabilities specifically to address these enterprise barriers at the infrastructure level.

AI Bridge provides the centralized governance layer that eliminates shadow AI. Every model interaction—whether from a developer using Cursor or an autonomous agent running overnight—flows through a single control point that handles authentication, logging, and cost tracking. Platform teams gain complete visibility into AI usage across the organization. Security teams get the audit trails that regulators actually trust. Finance teams see exactly where AI spend is going. The result is that developers can use the AI tools they need while enterprises maintain the oversight they require.
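As a rough illustration of what a single control point involves, the Python sketch below authenticates a caller, records an audit entry, and attributes cost to a team. It is not AI Bridge's API: the `ModelGateway` class, its fields, and the flat per-token price are all assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class ModelGateway:
    """Hypothetical control point for model calls (illustration only)."""

    teams_by_token: dict[str, str]   # credential -> owning team
    price_per_1k_tokens: float       # assumed flat price, for simplicity
    audit_log: list[dict] = field(default_factory=list)
    spend_by_team: defaultdict = field(
        default_factory=lambda: defaultdict(float)
    )

    def record_call(self, token: str, model: str, tokens_used: int) -> float:
        """Authenticate the caller, log the call, and return its cost."""
        team = self.teams_by_token.get(token)
        if team is None:
            # Denied calls are logged too, so the audit trail is complete.
            self.audit_log.append({"event": "denied", "model": model})
            raise PermissionError("unrecognized credential")
        cost = tokens_used / 1000 * self.price_per_1k_tokens
        self.spend_by_team[team] += cost
        self.audit_log.append(
            {"event": "allowed", "team": team, "model": model,
             "tokens": tokens_used, "cost": cost}
        )
        return cost
```

Because every call passes through `record_call`, the audit log and the per-team spend totals come from the same path, which is the property a centralized layer is meant to provide.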

Agent Boundaries solves the "what can this agent access?" problem through infrastructure-level network controls. Rather than trusting prompts or hoping agents behave safely, Boundaries enforces deterministic rules about which domains, APIs, and services agents can reach. An agent working on internal tooling might have full access to GitHub and Jira but zero access to the public internet. Another handling customer data might reach specific approved endpoints while everything else gets blocked by default. When prompt injection attempts or malicious instructions try to exfiltrate data, Boundaries stops them before damage occurs.
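The default-deny idea can be sketched in a few lines of Python. This is not how Agent Boundaries is configured or implemented; the role names, the `jira.example.internal` host, and the `is_allowed` helper are hypothetical, and real enforcement would happen at the network layer rather than in application code.

```python
from urllib.parse import urlsplit

# Hypothetical roles and hosts, for illustration only.
ALLOWED_HOSTS = {
    "internal-tooling": {"github.com", "api.github.com", "jira.example.internal"},
    "customer-data": {"records.example.internal"},
}


def is_allowed(agent_role: str, url: str) -> bool:
    """Return True only if the URL's host is on the role's allowlist.

    Unknown roles, malformed URLs, and unlisted hosts are all denied,
    so anything not explicitly permitted is blocked by default.
    """
    host = urlsplit(url).hostname
    if host is None:
        return False
    return host in ALLOWED_HOSTS.get(agent_role, set())
```

The rule is deterministic: the decision depends only on the agent's role and the destination host, not on anything the agent was prompted to do.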

Coder Tasks enables the long-running, autonomous execution that enterprises need without sacrificing governance. AI agents can work for hours on complex workflows—running test suites, generating documentation, refactoring legacy code—while maintaining full auditability. Platform teams define resource limits, timeout policies, and approval gates. Developers can inspect progress, intervene when needed, and review results before anything reaches production. The work happens in the same governed environments where human developers operate, making handoffs seamless: a human can open the exact workspace an agent used and continue where it left off, or an agent can pick up work a developer started.

Together, these capabilities provide what enterprises need: infrastructure that makes AI safe to adopt at scale. Teams that start with governed environments can experiment with autonomous agents without creating compliance gaps. They can scale from pilots to production without retrofitting governance onto systems that were never designed for it. The risks don't disappear, but infrastructure moves them from inevitable to manageable.

Ready to see how governed infrastructure changes AI adoption? Join thousands of platform engineers in the Coder Discord community to discuss real-world AI governance challenges and solutions. Or get hands-on: download Coder's open-source tools and build your first agent-ready workspace in minutes. The foundation you build today determines what AI capabilities you can safely deploy tomorrow.
